We are only as good as our tools. That’s especially true for L&D professionals. You have to constantly revisit and reevaluate the models and frameworks you rely on. Are they still serving you? Or are you serving them?

One tool I see get in the way of the L&D professional all too often is the Kirkpatrick Model.

How the Kirkpatrick Model Works

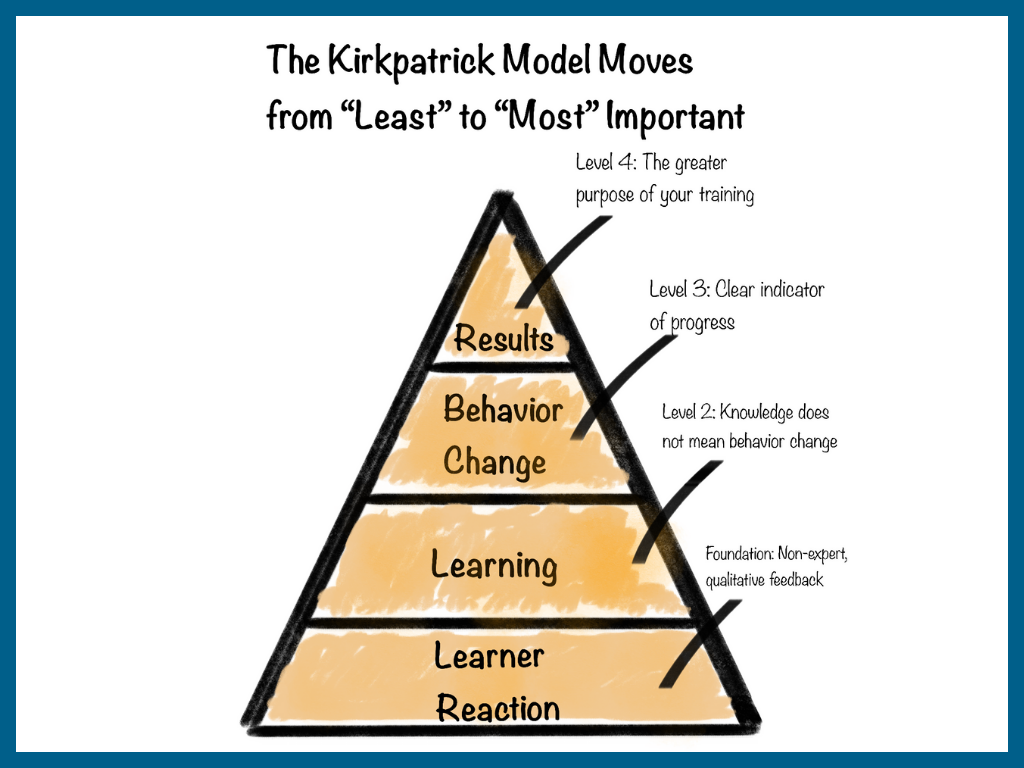

The Kirkpatrick Model, used to evaluate training programs, involves four key levels. The idea is that L&D professionals move through each level of evaluation in order:

Level 1: Learner Reaction – Was the training enjoyable, engaging, and relevant?

Level 2: Learning – Did knowledge transfer occur?

Level 3: Behavior Change – Are participants applying what they learned on the job?

Level 4: Results – Did the training achieve the intended business outcomes?

On the surface, this logic is sound. Reactions, knowledge transfer, behavior change, and results are all useful measures of training effectiveness. But in practice, the structure is less effective than it sounds, and it can even get in the way of useful analysis.

The Problem with the Kirkpatrick Model: Learner Reactions Mislead L&D Professionals

The issue with the Kirkpatrick Model is that it makes “learner reaction” the foundation for evaluating training.

Of course, learner reactions are not useless. But they don’t offer as much insight into the effectiveness of a program as you might think. After all, learners aren’t experts in gap and need analysis. Learners aren’t instructional designers. And learners aren’t experts in learning theory and behavior change. You are.

And here’s the real kicker: the type of learning that learners enjoy most is typically the least likely to change behavior. Imagine, for example, that you’ve identified a population that is experiencing health problems because they’re overweight. Health professionals determine that everyone in this group should get on a treadmill and walk 10,000 steps per day. When you interview those participants after their workout and ask them how it went, they will likely say, “It was boring and grueling.” And when you ask if they would recommend the program, they will likely say no. But that doesn’t mean the program is ineffective.

“Results” and “Learner Reactions” can be at odds. “It sounds counterintuitive, but learning that leads to actionable shifts in culture or behavior inevitably requires discomfort, dissonance, some conflicting ideas, or unlearning for adults,” said Lora Delgado, Ed.D, CPTD, adjunct professor of Learning and Design in Context at Vanderbilt University. “It’s not as pleasant as, say, listening to a highly engaging and interesting speaker talk about something that does not actually require a subsequent change in anything that individuals do or believe.”

The issues continue as we move up through the levels of the Kirkpatrick Model. “Learning” is Level Two. Knowledge transfer is important, but the “Knowing-Doing Gap” continues to plague leadership development. Knowledge alone does not equate to behavior change.

That brings us (finally) to Levels Three and Four: Behavior Change and Results. They take the “before” and “after” into account: Have leaders changed their behavior and created new habits, and has that moved the needle as a result? These are the measures that you should really value.

A Better Way to Evaluate Training: Flip the Model, Start with the End in Mind

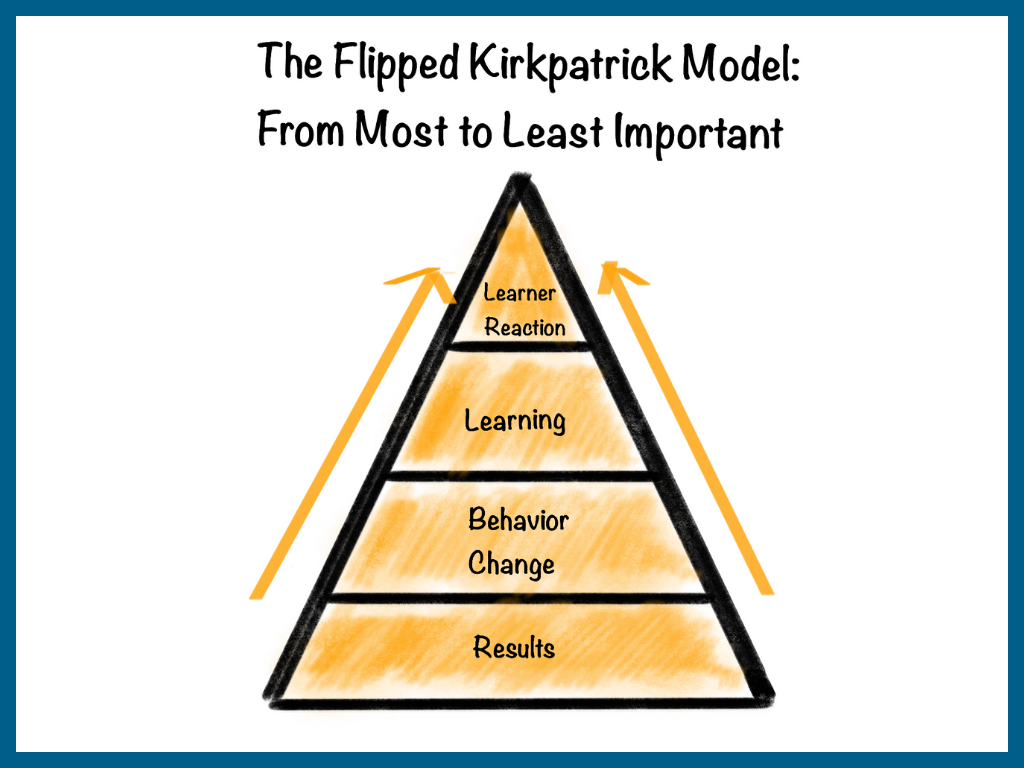

To accurately measure training effectiveness, I propose that we flip the Kirkpatrick Model on its head. Results, not Learner Reactions, should serve as the foundation for evaluation.

Level One, in the flipped model, is Results. Start with the end in mind: the results you want the training to achieve. This is where L&D pros should spend the bulk of their evaluation efforts. What is your program trying to affect? What’s your “why”? Your foundation for evaluation should be the piece you can’t do without: your Results!

For example, a key priority might be to help frontline managers increase team engagement. As a foundational piece of your program, you should measure each manager’s team engagement. And you should have a thoughtful design to check or pulse those results as you go.

Level Two should be Behavior Change. It’s through changes in behavior that you achieve your results.

To continue with the example of frontline managers and team engagement, at this level you might assess specific leadership behaviors that are psychometrically proven to drive employee engagement. You might discover, for example, that while your overall engagement scores improved (yay!), there are a handful of managers who need a deeper level of support (opportunity!).

Level Three, then, is Learning. Are leaders retaining knowledge and information? Here’s why this can go after Behavior Change: if your leaders apply what they’ve learned, whether or not they remember the content likely won’t be a concern.

That said, if you find at Level Two that a handful of managers are struggling to improve their behavior, you might work your way up the pyramid to Learning. Ask, “How can I create a supporting guide or tool to help them build the right habits?”

Finally, Level Four is Learner Reaction. Gather opinions and feedback on training, but don’t make learner reactions the be-all and end-all measure of effectiveness. In the grand scheme of things, learner reactions are the least meaningful data points in the model.

Flip the Model, Get Results

The flipped Kirkpatrick Model should help more L&D pros zoom out and get a much-needed big-picture view of what they want to affect. Instead of getting caught in the weeds (learners’ reactions), begin with the end in mind. Then, work your way up the pyramid.